Intel® Neural Compressor¶

An open-source Python library supporting popular model compression techniques on all mainstream deep learning frameworks (TensorFlow, PyTorch, ONNX Runtime, and MXNet)

Intel® Neural Compressor, formerly known as Intel® Low Precision Optimization Tool, is an open-source Python library that runs on Intel CPUs and GPUs. It provides unified interfaces across multiple deep learning frameworks for popular network compression techniques such as quantization, pruning, and knowledge distillation. The tool supports automatic accuracy-driven tuning strategies that help the user quickly find the best quantized model. It also implements several weight-pruning algorithms for generating a pruned model that meets a predefined sparsity goal, and it supports knowledge distillation from a teacher model to a student model. Intel® Neural Compressor is a critical AI software component in the Intel® oneAPI AI Analytics Toolkit.
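To make the term concrete: quantization maps 32-bit floating-point values onto a small integer range. The following is a minimal pure-Python sketch of symmetric int8 quantization, written for illustration only. It is not Intel® Neural Compressor's implementation, and the names quantize_int8 and dequantize are hypothetical.

```python
def quantize_int8(values):
    """Symmetric per-tensor int8 quantization (illustrative only)."""
    max_abs = max(abs(v) for v in values)
    # Map the largest magnitude to 127; guard against an all-zero input.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the int8 codes."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.003, 1.0]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
# Rounding error per element is at most half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
```

The small value 0.003 collapses to code 0 here, which is the kind of precision loss that accuracy-driven tuning is meant to keep within acceptable bounds.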

Visit the Intel® Neural Compressor online documentation website at: https://intel.github.io/neural-compressor.

Installation¶

Prerequisites¶

Python version: 3.7, 3.8, 3.9, 3.10
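A script that depends on the library could check the interpreter against this list before going further. The sketch below uses only the standard library; the helper name is_validated_python is hypothetical, and the version set is copied from the line above rather than queried from the package.

```python
import sys

# Versions listed under Prerequisites above.
VALIDATED_PYTHON_VERSIONS = {(3, 7), (3, 8), (3, 9), (3, 10)}


def is_validated_python(version_info=None):
    """Return True if the (major, minor) pair is in the validated list."""
    if version_info is None:
        version_info = sys.version_info
    return tuple(version_info[:2]) in VALIDATED_PYTHON_VERSIONS


if not is_validated_python():
    print(
        "Warning: Python %d.%d is not in the validated list"
        % tuple(sys.version_info[:2])
    )
```

A version outside the list may still work; the check only reports whether the interpreter matches what was validated.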

Install on Linux¶

Release binary install

# install stable basic version from pypi

pip install neural-compressor

# or install stable full version from pypi (including GUI)

pip install neural-compressor-full

Nightly binary install

git clone https://github.com/intel/neural-compressor.git

cd neural-compressor

pip install -r requirements.txt

# install nightly basic version from pypi

pip install -i https://test.pypi.org/simple/ neural-compressor

# or install nightly full version from pypi (including GUI)

pip install -i https://test.pypi.org/simple/ neural-compressor-full

More installation methods can be found in the Installation Guide. Please check out our FAQ for more details.
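After installing, one way to confirm which variant is present is to query the package metadata. This is a sketch using the standard-library importlib.metadata module (Python 3.8 or later); the helper installed_version is hypothetical, and the distribution names are taken from the pip commands above.

```python
from importlib import metadata


def installed_version(dist_names):
    """Return the version of the first installed distribution, else None."""
    for name in dist_names:
        try:
            return metadata.version(name)
        except metadata.PackageNotFoundError:
            continue
    return None


version = installed_version(["neural-compressor", "neural-compressor-full"])
print("Intel Neural Compressor:", version or "not installed")
```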

Getting Started¶

Quantization with Python API¶

# A TensorFlow Example

pip install tensorflow

# Prepare fp32 model

wget https://storage.googleapis.com/intel-optimized-tensorflow/models/v1_6/mobilenet_v1_1.0_224_frozen.pb

from neural_compressor.config import PostTrainingQuantConfig

from neural_compressor.data.dataloaders.dataloader import DataLoader

from neural_compressor.data import Datasets

dataset = Datasets('tensorflow')['dummy'](shape=(1, 224, 224, 3))

from neural_compressor.quantization import fit

config = PostTrainingQuantConfig()

fit(
    model="./mobilenet_v1_1.0_224_frozen.pb",
    conf=config,
    calib_dataloader=DataLoader(framework='tensorflow', dataset=dataset),
    eval_dataloader=DataLoader(framework='tensorflow', dataset=dataset))
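The introduction mentions accuracy-driven tuning. As a rough mental model only, and not the library's actual strategy code, such tuning can be pictured as trying candidate configurations in order and keeping the first one whose accuracy stays within a tolerance of the fp32 baseline. Everything in this sketch is a hypothetical stand-in: the tune function, the candidate names, the toy accuracies, and the 1% relative tolerance.

```python
def tune(candidates, evaluate, baseline_accuracy, max_relative_loss=0.01):
    """Return the first candidate whose accuracy drop is within tolerance.

    candidates: iterable of opaque configuration objects, tried in order.
    evaluate: callable mapping a candidate to an accuracy in [0, 1].
    """
    threshold = baseline_accuracy * (1.0 - max_relative_loss)
    for candidate in candidates:
        if evaluate(candidate) >= threshold:
            return candidate
    return None  # No candidate met the accuracy goal.


# Toy accuracies standing in for real evaluation runs.
toy_accuracy = {
    "int8_all_ops": 0.690,
    "int8_except_first_conv": 0.705,
    "int8_except_first_and_last": 0.709,
}
best = tune(toy_accuracy, toy_accuracy.get, baseline_accuracy=0.710)
```

With these toy numbers the fully quantized candidate falls below the threshold, so the loop settles on the next, less aggressive configuration.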

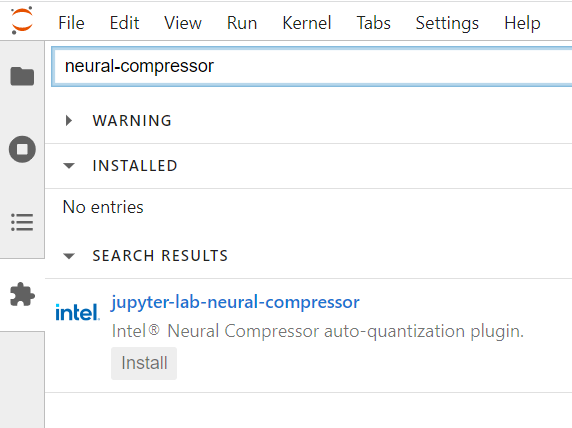

Quantization with JupyterLab Extension¶

Search for jupyter-lab-neural-compressor in the Extension Manager in JupyterLab and install it with one click.

Quantization with GUI¶

# An ONNX Example

pip install onnx==1.12.0 onnxruntime==1.12.1 onnxruntime-extensions

# Prepare fp32 model

wget https://github.com/onnx/models/raw/main/vision/classification/resnet/model/resnet50-v1-12.onnx

# Start GUI

inc_bench

System Requirements¶

Validated Hardware Environment¶

Intel® Neural Compressor supports CPUs based on Intel 64 architecture or compatible processors:¶

Intel Xeon Scalable processor (formerly Skylake, Cascade Lake, Cooper Lake, Ice Lake, and Sapphire Rapids)

Intel Xeon CPU Max Series (formerly Sapphire Rapids HBM)

Intel® Neural Compressor supports GPUs built on Intel’s Xe architecture:¶

Intel Data Center GPU Flex Series (formerly Arctic Sound-M)

Intel Data Center GPU Max Series (formerly Ponte Vecchio)

Intel® Neural Compressor quantized ONNX models support multiple hardware vendors through ONNX Runtime:¶

Intel CPU, AMD/ARM CPU, and NVIDIA GPU. Please refer to the validated model list.

Validated Software Environment¶

OS version: CentOS 8.4, Ubuntu 20.04

Python version: 3.7, 3.8, 3.9, 3.10

| Framework | TensorFlow | Intel TensorFlow | Intel® Extension for TensorFlow* | PyTorch | Intel® Extension for PyTorch* | ONNX Runtime | MXNet |
|---|---|---|---|---|---|---|---|
| Version | 2.11.0, 2.10.1, 2.9.3 | 2.11.0, 2.10.0, 2.9.1 | 1.0.0 | 1.13.1+cpu, 1.12.1+cpu, 1.11.0+cpu | 1.13.0, 1.12.1, 1.11.0 | 1.13.1, 1.12.1, 1.11.0 | 1.9.1, 1.8.0, 1.7.0 |

Note: Set the environment variable TF_ENABLE_ONEDNN_OPTS=1 to enable oneDNN optimizations if you are using TensorFlow v2.6 to v2.8. oneDNN is the default for TensorFlow v2.9.
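When the variable cannot be exported in the shell, it can also be set from Python. The sketch below assumes the variable has to be in place before TensorFlow is first imported, so the assignment comes first and the import is left as a comment.

```python
import os

# Set the flag before TensorFlow is imported; setdefault keeps any value
# the user has already exported in the shell.
os.environ.setdefault("TF_ENABLE_ONEDNN_OPTS", "1")

# import tensorflow as tf  # import only after the variable is set
```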

Validated Models¶

Intel® Neural Compressor has validated quantization on more than 10,000 models from popular model hubs (e.g., HuggingFace Transformers, Torchvision, TensorFlow Model Hub, ONNX Model Zoo), with speedups of up to 4.2x on VNNI while minimizing accuracy loss. Over 30 pruning and knowledge distillation samples are also available. More details on typical validated models are available here.

Documentation¶

| Overview | | | |
|---|---|---|---|
| Architecture | Workflow | APIs | GUI |
| Notebook | Examples | Results | Intel oneAPI AI Analytics Toolkit |
| Python-based APIs | | | |
| Quantization | Advanced Mixed Precision | Pruning (Sparsity) | Distillation |
| Orchestration | Benchmarking | Distributed Compression | Model Export |
| Neural Coder (Zero-code Optimization) | | | |
| Launcher | JupyterLab Extension | Visual Studio Code Extension | Supported Matrix |
| Advanced Topics | | | |
| Adaptor | Strategy | Distillation for Quantization | SmoothQuant (Coming Soon) |

Selected Publications/Events¶

NeurIPS’2022: Fast Distilbert on CPUs (Dec 2022)

NeurIPS’2022: QuaLA-MiniLM: a Quantized Length Adaptive MiniLM (Dec 2022)

Blog on Medium: MLefficiency — Optimizing transformer models for efficiency (Dec 2022)

Blog on Medium: One-Click Acceleration of Hugging Face Transformers with Intel’s Neural Coder (Dec 2022)

Blog on Medium: One-Click Quantization of Deep Learning Models with the Neural Coder Extension (Dec 2022)

Blog on Medium: Accelerate Stable Diffusion with Intel Neural Compressor (Dec 2022)

View our full publication list.

Additional Content¶

Hiring¶

We are actively hiring. Send your resume to inc.maintainers@intel.com if you are interested in model compression techniques.